Edge Computing: Hype or Reality?

What is Edge Computing?

The most basic explanation of edge computing describes it as the next generation of physical computing infrastructure and architecture, relocated closer to enterprises and people to meet the demand from the next wave of devices and applications, better known as the Internet of Things (IoT) or Industrial IoT (IIoT). In short, data packets travel shorter distances, and benefits such as low latency, cost reduction, security, and data residency are just a few of the reasons developers are moving away from cloud computing and to the edge.

The Linux Foundation’s Open Glossary of Edge Computing defines it as the delivery of computing capabilities to the logical extremes of a network in order to improve the performance, security, operating cost, and reliability of applications and services. By shortening the distance between devices and the cloud resources that serve them, and also reducing the number of network hops, edge computing mitigates the latency and bandwidth constraints of today’s internet, ushering in new classes of applications, offering exciting breakthroughs and possibilities. In practical terms, this means distributing new resources and software stacks along the path between today’s centralized data centers and the increasingly large number of deployed nodes in the field, on both the service provider and user sides of the last mile network.
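To make the distance and hop argument concrete, here is a rough back-of-the-envelope sketch. It assumes signals travel through fiber at about 200,000 km/s (roughly two-thirds the speed of light in a vacuum). The per-hop forwarding delay, the distances, and the hop counts are illustrative assumptions, not measurements or figures from the glossary.

```python
# Rough round-trip latency estimate: propagation delay plus per-hop forwarding delay.
# All inputs below are illustrative assumptions, not measured values.

SPEED_IN_FIBER_KM_PER_S = 200_000  # approximate signal speed in optical fiber


def round_trip_ms(distance_km, hops, per_hop_ms=0.5):
    """Estimate round-trip time in milliseconds.

    distance_km: one-way distance between the device and the server
    hops:        number of network hops in one direction
    per_hop_ms:  assumed forwarding delay per hop (hypothetical value)
    """
    propagation_ms = 2 * distance_km / SPEED_IN_FIBER_KM_PER_S * 1000
    forwarding_ms = 2 * hops * per_hop_ms
    return propagation_ms + forwarding_ms


# Hypothetical comparison: a distant centralized data center vs. a nearby edge node.
cloud = round_trip_ms(distance_km=2000, hops=15)
edge = round_trip_ms(distance_km=20, hops=3)

print(f"centralized cloud: {cloud:.1f} ms")
print(f"edge node:         {edge:.1f} ms")
```

Real networks add queuing, processing, and last-mile delays that this sketch ignores, but it shows why cutting both distance and hop count lowers the latency floor.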

How does edge computing work?

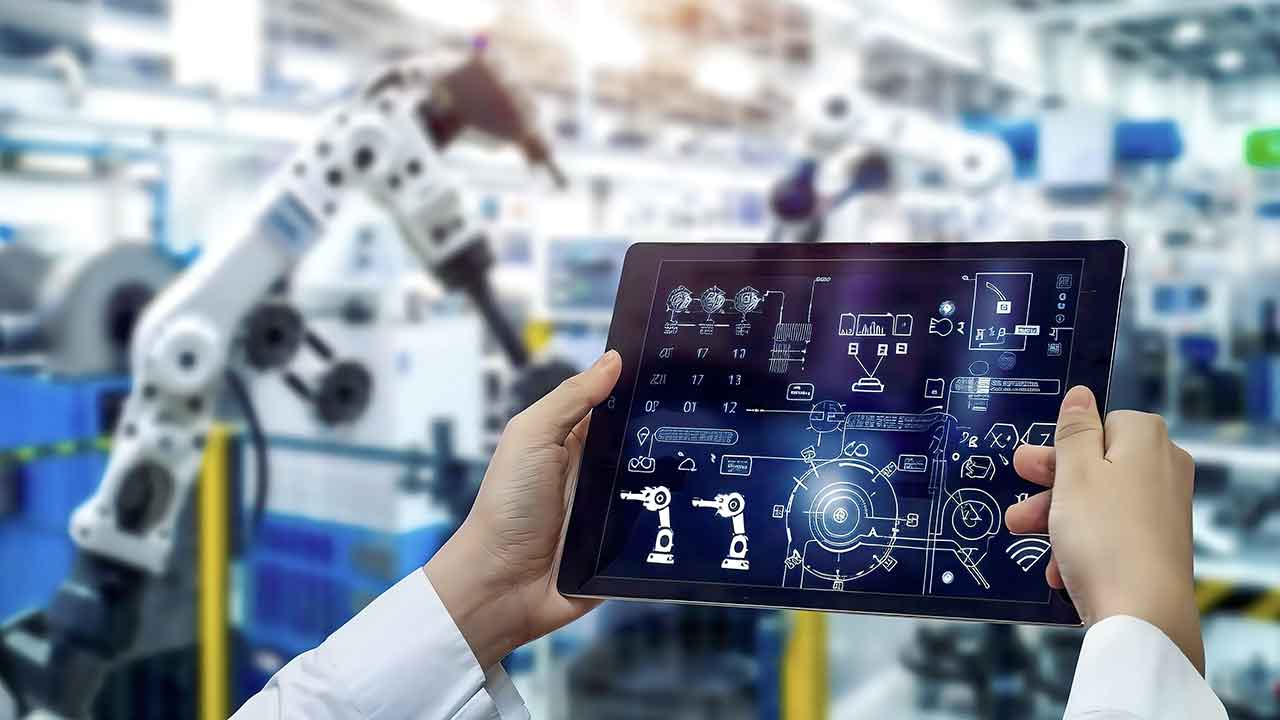

In the era of IoT, edge computing is moving out of the realm of the abstract (spreadsheets, mobile apps, websites, and software-as-a-service platforms) and into the real world to power augmented reality, support decision-making via AI and machine learning, operate cars, and increase public safety.

Edge computing, as a geographically distributed architecture, allows applications to execute closer to end users, using data, compute resources, networking, and infrastructure in close proximity. This meets the demand created by the explosive increase in the number of IoT and IIoT devices and the growing complexity of their applications. Autonomous vehicles (land and air), smart cities, AR/VR, machine learning, and neural networks are just a few edge computing use cases. This technology will be critical for many innovations that have yet to be imagined.

Where is the Edge?

The most common question asked is: where exactly is “the edge”? It is a fair question, but you would be hard-pressed to find any two executives who give the same answer, because the answer is: it depends. For a telecom company, it may be located at the network edge. Manufacturers may define it as the factory floor. Autonomous vehicle makers may define it as anywhere their vehicles can drive. And smart cities may just say “everywhere”. These varying perspectives and requirements for a distributed network mean the physical locations of edge computing will continuously change. The difficulty stems from the expectation that the number of “things” will grow from 20B in 2020 to 75B in 2025. It is nearly impossible to predict what those things may be, how and where they will operate, and what applications they will run that require additional computing. We wrote an article about what the intelligent edge is, in case you want to know more.

We think the best answer is that the edge is located within the last 1,000 feet of every connected device on the planet.

Obviously, edge computing will never come close to serving the entire edge as defined above, since doing so would be economically infeasible. But the important takeaway is that innovation in where physical computing infrastructure resides will be critical to the success of edge computing.

Is Edge Computing Hype or Reality?

As reported by TechRepublic, Edge Computing is slated to skyrocket. There are various reasons why this is becoming a reality today. Some of these reasons include:

- New applications – and therefore future growth of IoT, data, etc. – will require lower latency that will best be served by edge computing and next-generation CDN providers.

- The number of IoT devices is projected to reach into the trillions.

- Infrastructure is becoming highly automated as the cloud has become mainstream.

- Rise in automation across industries.

- Rise in multi-dimensional experiences across industries.

- The internet was created with the intention of decentralized infrastructure; centralized clouds are concentrating too much power in a few technology companies.

The race is on for the $4T edge computing economy. The number of IoT devices continues to grow, and the amount of data generated by these devices has been forecast by IDC to increase from 18.9B Zettabytes in 2019 to 75.3B Zettabytes in 2025. If the past is any predictor of the future, the amount of edge data that will be generated is currently grossly underestimated.
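For a sense of scale, the forecast above can be turned into an implied yearly growth rate. The sketch below uses the standard compound annual growth rate formula and takes the two figures exactly as quoted; because only their ratio matters, the unit does not affect the result. The function name `cagr` is just an illustrative helper.

```python
# Implied compound annual growth rate (CAGR) from the quoted 2019 and 2025 figures.

def cagr(start, end, years):
    """Return the constant yearly growth rate that turns `start` into `end` over `years`."""
    return (end / start) ** (1 / years) - 1


# Figures as quoted in the text (2019 -> 2025 is six years of growth).
data_growth = cagr(18.9, 75.3, 2025 - 2019)

print(f"implied data growth: {data_growth:.1%} per year")
```

By this rough measure, the forecast implies IoT data growing by about a quarter every year, compounding.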

What’s your opinion about this? Is it hype or reality?

This is an excerpt from an article written by Brad Kirby, COO at EDJX, originally published on the EDJX blog.
