You installed the sensors. You bought the AI license. You hired the data scientist. Yet your million-dollar pilot is stuck, delivering quirky answers that no one on the floor dares to trust. The problem isn’t your algorithm. It’s the coffee-stained, handwritten maintenance log from 1998 that your new AI has no idea how to read.

In manufacturing, the data that matters most is often the hardest for AI to digest. While teams chase the latest large language model, they ignore the chaotic world of human-generated documents, shift notes, supplier scribbles, legacy CAD files, and scanned safety checks. This isn’t just data; it’s tribal knowledge, trapped in formats that break even the smartest systems. Feeding this mess to an AI guarantees one thing: failure.

The “Garbage In” Epidemic

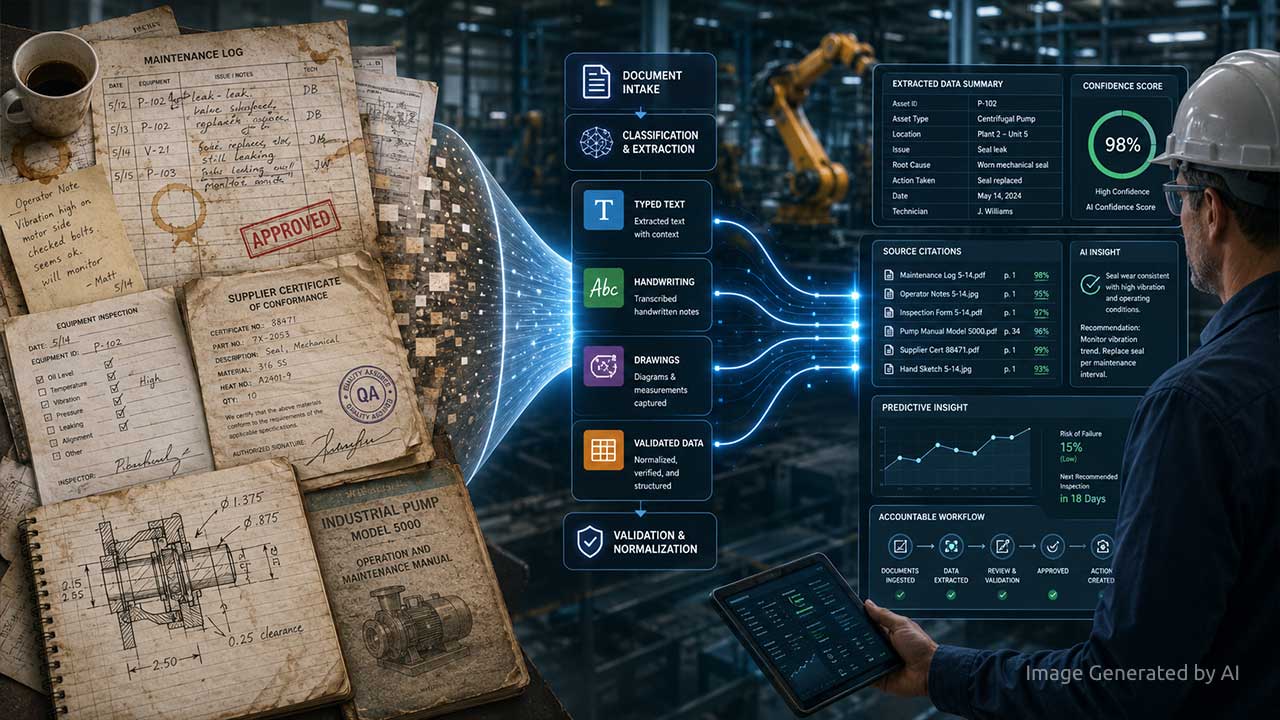

Consider a real-world test shown to manufacturing leaders. A single document, a poor-quality scan containing typed text, cursive handwriting, hand-drawn diagrams, and checkboxes, was fed directly to a top AI model. The result? The AI got nearly every critical field wrong, confidently inventing answers. The same document, when first broken down by a specialized pre-processor that separated text from handwriting from drawings, delivered perfect accuracy. The AI didn’t change. The input did.

This is the silent epidemic killing ROI. Your AI is drowning in garbage inputs. Maintenance reports, quality certificates, and equipment manuals were created for human eyes, not machines. They contain signatures, stamps, sketches, and faded text that generic AI cannot coherently parse. Deploying AI without a system to clean and structure these documents is like putting a Formula 1 engine in a car with square wheels.

The Fix is Boring (And That’s Why It Works)

The breakthrough isn’t a smarter AI. It’s a smarter intake process. The winning strategy uses a “pre-processing” layer that acts like an AI interpreter. It doesn’t replace your chosen model; it forces your documents to speak the model’s language. This layer performs a forensic analysis on every file: isolating handwritten notes for a handwriting expert AI, routing diagrams to a visual analysis tool, and sending typed text to a high-precision reader. It then validates the results against your business rules, like checking an extracted part number against your approved vendor list, before the data ever touches your main AI.

This is the essential plumbing that makes AI trustworthy. It attaches a “confidence score” and a source citation to every piece of data it extracts. When the AI later suggests a parts order, a supervisor can click to see the original supplier certificate, the confidence of the data pull, and the chain of custody. This turns AI from a mysterious black box into an accountable colleague.

Stop Chasing Models, Start Cleaning Data

The manufacturers winning with AI have stopped the endless search for a perfect algorithm. They’ve started with the document no one else wanted to tackle. They pick one high-impact use case, like automating the review of supplier quality certificates, and use it to build a clean, governed data pipeline. The result is often an immediate 30-50% cut in manual processing time and the recovery of thousands of engineering hours.

The lesson is counterintuitive: to advance, you must first look backward. Your competitive edge isn’t locked in a new AI API. It’s locked in your file room. The companies that dare to open those drawers and systematically convert their paper past into structured data are the ones building an AI foundation that won’t crumble on day one. Your most important AI project starts with the document you forgot existed.

Sponsored by Adlib Software.

This article is based on the IIoT World Manufacturing Day session, “Preparing Your Data Layer for AI-Driven Product and Supply-Chain Decisions,” sponsored by Adlib Software. Thank you to the speakers: Chris Huff (Adlib Software), Anthony Vigliotti (Adlib Software), Sabrina Joos (Siemens), and Hamish Mackenzie (New Space AI).

Frequently Asked Questions

1. Why do manufacturing AI pilots fail when reading documents?

Manufacturing AI often fails because algorithms are fed unstructured, human-generated documents like handwritten maintenance logs, quality certificates, and sketches. Generic AI models cannot reliably parse this messy data, leading to hallucinations and inaccurate outputs.

2. What is document pre-processing in industrial AI?

Document pre-processing is an intake layer that cleans and structures data before it reaches the main AI model. It uses specialized tools to separate and interpret typed text, handwriting, and visual diagrams, ensuring the AI only receives clean, validated data.

3. How does unstructured data affect manufacturing ROI?

Unstructured data traps critical “tribal knowledge” in unreadable formats. If not properly processed, it leads to failed AI pilots and lost engineering hours. Cleaning this data can cut manual processing time by 30% to 50% for tasks like supplier quality reviews.

4. How do you make legacy manufacturing data AI-ready?

The most effective method is implementing a governed data pipeline that systematically converts legacy paper records into structured data. A proper system attaches a confidence score and a source citation to every extracted data point, making the AI’s output fully accountable.